Module 1-1: Large Language Models

This course is all about AI, but what exactly do we mean when we say that? There are a lot of ways to interpret it. So, let's spend a little time getting to know ChatGPT and what kind of AI system it is.

What is Artificial Intelligence?

Artificial intelligence is a field of computer science dedicated to making computers act and think like humans do. Typically, computers are programmed to perform only very specific tasks, most of which involve math and logic. AI is a way to make computers perform tasks that are more like human behavior. That's the technical definition, at least. In a marketing sense, however, "AI" is a really vague term, because it means entirely different kinds of computing to different people. That's why it's important for us to distinguish between different kinds of AI. Here are a few examples.

Hardware

To some companies, "AI" is a catch-all term for any product that uses software to do something well. For example, an "AI Rice Cooker" won an iF Design Award in 2022; it uses sensors and motors to make the perfect rice for you. But it's a bit of a stretch to say it "uses AI." It just cooks rice. Come on now.

Machine Learning

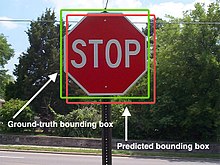

Machine learning is a kind of program where a computer learns how to do something like a human would: by repeatedly practicing, failing at, and getting better at a particular task. Much of our software today relies on machine learning. One of the most common applications is letting computers analyze a picture and identify the objects in a scene.

However, for this course, we're not interested in this kind of "AI" either. That's not because it's unimportant, but because designing machine learning models almost always involves a lot of trial and error and an incredibly large amount of data. Unless you are a professional researcher or a company, this is not something you can do on your own.

Large Language Models

Here we are! LLMs, or Large Language Models, are a kind of machine learning model that has already been trained on a colossal amount of data and can create natural-language outputs tailored to your questions. In other words, they are programs that produce human-like text.

This kind of AI is the main focus of this course due to its familiarity and ease of use. You already know at least one LLM: ChatGPT. Many programmers also use other LLMs, like Google's Gemini and Anthropic's Claude. New models keep coming out all the time, and each has its own unique features and capabilities. Generally, though, most of these LLMs work in much the same way. Because all you need to do to use an LLM is send it a plain-English question, LLMs are very well suited for new designers and developers.

How do Large Language Models Work?

As stated before, ChatGPT is a large language model: a machine learning model trained on a gigantic amount of information that can respond to your questions like a human would.

Large language models are really complicated, but it's useful to know at least roughly how they work. ChatGPT is not magic, and it's not sentient; it's just an algorithm.

ChatGPT is Just an Algorithm

Algorithms are programs that take in an input and generate an output. In the case of ChatGPT, this input is called a prompt. An example of a prompt is something like "What's 2 + 4?" or "Explain to me how taxes work."

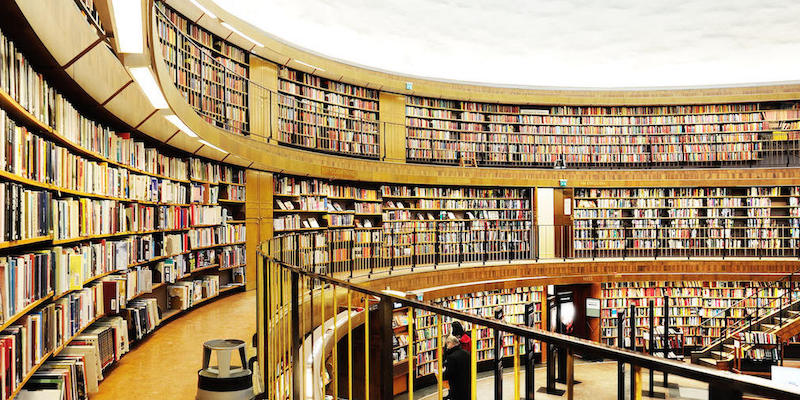

1. The Dataset

Large language models are commonly trained on text collected from the Internet. The Internet is incredibly large and filled with terabytes of information: news articles, blog posts, social media posts, and more are all included.

You can think of an LLM like ChatGPT as having access to a gigantic library filled with all sorts of books.

2. What Are You Saying?

The library that ChatGPT has access to is just too big to read all at once. When you ask ChatGPT a question, it needs to figure out where to look to find your answer.

But ChatGPT is a computer, and computers don't understand natural languages such as English or Chinese. Instead, your question has to be turned into numbers the computer can do calculations with. The real process is a lot more complicated than this, but it is part of a field called natural language processing, or NLP.

Once you ask ChatGPT a question, it uses NLP to pinpoint the book in the library that is most related to your question.
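To make the "turn your question into numbers" step a bit more concrete, here is a minimal Python sketch. It assumes a toy vocabulary that gives each whole word its own number; the function names `build_vocabulary`, `encode`, and `decode` are made up for this example. Real LLMs use more sophisticated tokenizers that split text into word pieces called tokens, so the actual numbers ChatGPT works with look different.

```python
def build_vocabulary(text):
    """Give each distinct word a number (its ID), in order of first appearance.

    Hypothetical toy vocabulary: real tokenizers work on word pieces, not whole words.
    """
    vocab = {}
    for word in text.lower().split():
        if word not in vocab:
            vocab[word] = len(vocab)
    return vocab


def encode(text, vocab):
    """Turn a sentence into the list of numbers the computer actually calculates with."""
    return [vocab[word] for word in text.lower().split()]


def decode(ids, vocab):
    """Turn a list of numbers back into words."""
    id_to_word = {number: word for word, number in vocab.items()}
    return " ".join(id_to_word[number] for number in ids)


vocab = build_vocabulary("explain to me how taxes work what is two plus four")
ids = encode("how taxes work", vocab)
print(ids)
print(decode(ids, vocab))
```

Here `encode` turns "how taxes work" into `[3, 4, 5]`, and `decode` turns those numbers back into the same words. A word that isn't in this toy vocabulary would raise a `KeyError`; real tokenizers avoid that by breaking unfamiliar words into smaller pieces.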

3. Building the Answer

Once ChatGPT has found the right book to start with, it can begin generating your answer. The "book" in this dataset is really just a word or a phrase. Imagine that it writes this phrase down on a sheet of paper.

But its answer is only one word long so far. ChatGPT needs to read more than one book in the library to get your full answer, so it takes its answer so far and uses NLP on it to find the next word.

ChatGPT repeats this process of reading books and finding the next one until it reaches an answer of satisfactory length. Then, it gives you back the piece of paper with the answer on it.
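The loop described above (find a next word, write it down, repeat) can be sketched as a toy Python program. This is a minimal sketch under big simplifying assumptions: the "library" is just a count of which word followed which in three made-up sentences (a simple, much older technique called a bigram model), and the next word is chosen by looking only at the last word written. ChatGPT instead uses a huge neural network that looks at your whole prompt and the whole answer so far. The `END` marker and the `max_words` limit are hypothetical stand-ins for how a model decides the answer has reached a satisfactory length.

```python
from collections import Counter, defaultdict

END = "<end>"  # hypothetical marker meaning "stop writing here"

# A tiny, made-up corpus standing in for the "library".
corpus = [
    "the cat sat on the mat",
    "the cat ate the fish",
    "the dog sat on the rug",
]


def build_library(sentences):
    """Count which word follows each word, adding an END marker after every sentence."""
    library = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split() + [END]
        for current, following in zip(words, words[1:]):
            library[current][following] += 1
    return library


def next_word(answer_so_far, library):
    """Look only at the last word written so far and return its most common follower."""
    followers = library.get(answer_so_far[-1])
    if not followers:
        return END  # nothing ever followed this word, so stop
    return followers.most_common(1)[0][0]


def generate(prompt, library, max_words=10):
    """Add one word at a time until END comes up or the answer reaches max_words."""
    answer = prompt.split()
    while len(answer) < max_words:
        word = next_word(answer, library)
        if word == END:
            break
        answer.append(word)
    return " ".join(answer)


library = build_library(corpus)
print(generate("the dog", library))
```

Run as written, this prints `the dog sat on the cat sat on the cat`. Because the toy always picks the single most common next word, it goes around in circles until it hits the word limit, which is one reason real LLMs add some randomness when choosing words.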

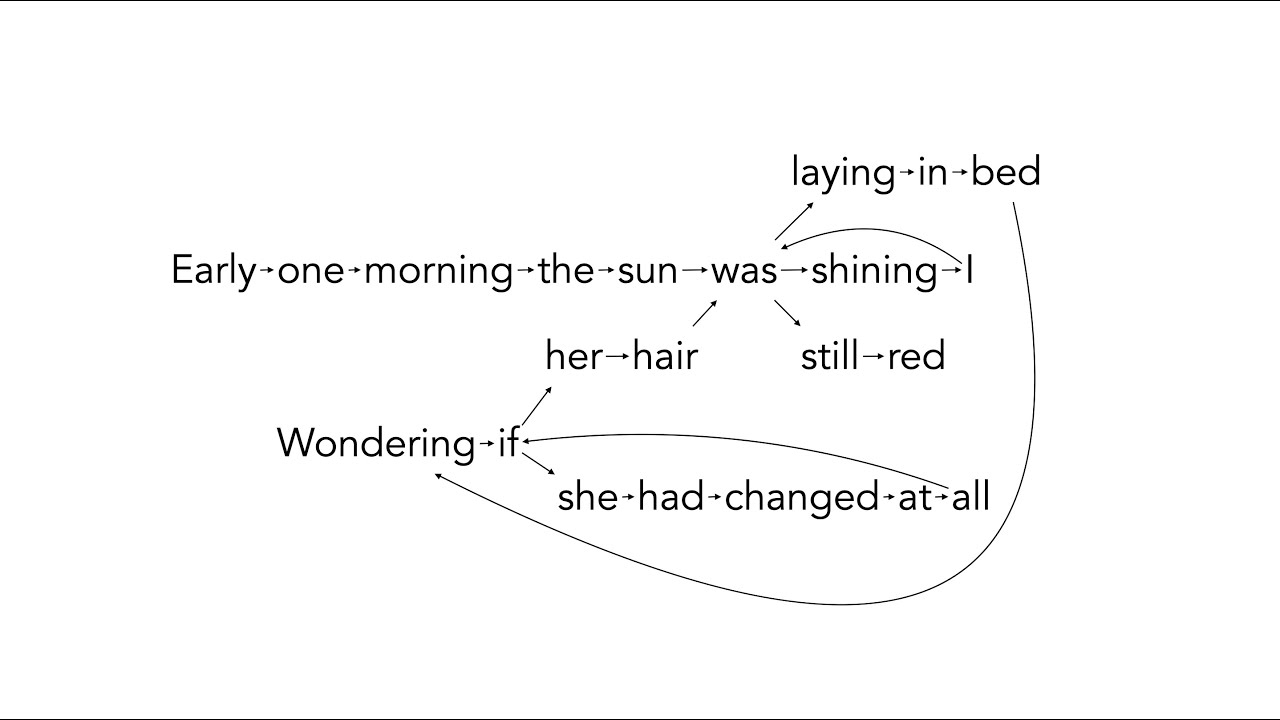

This process of building your answer can vary from run to run, because LLMs use randomness when choosing words. For example, here are several ways in which it can generate a response.
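Here is a minimal Python sketch of where that randomness comes in, assuming a made-up table of how often each word followed "the". Instead of always taking the most common next word, it picks one at random, weighted by how often each was seen. The `temperature` parameter is modeled on the setting of the same name that many LLM APIs expose; raising each weight to the power `1 / temperature` matches the usual way temperature reshapes probabilities, but treat this as an illustration rather than how any particular model implements it.

```python
import random
from collections import Counter

# Made-up counts of which words followed "the" in a tiny example corpus.
followers = Counter({"cat": 2, "mat": 1, "fish": 1, "dog": 1, "rug": 1})


def sample_next_word(followers, rng, temperature=1.0):
    """Pick a next word at random, weighted by how often each one was seen.

    temperature must be > 0. Values below 1 make common words even more
    likely; values above 1 even out the odds so rarer words appear more often.
    """
    words = list(followers)
    top = max(followers.values())
    # Scale counts to at most 1 before raising to 1/temperature, so small
    # temperatures shrink rare words toward 0 instead of overflowing.
    weights = [(count / top) ** (1.0 / temperature) for count in followers.values()]
    return rng.choices(words, weights=weights, k=1)[0]


rng = random.Random(42)  # fixed seed so the example is repeatable
for _ in range(5):
    print("the", sample_next_word(followers, rng))
```

With a fixed seed the same words come out on every run; change the seed (or drop it) and the picks change, which is why asking an LLM the same question twice can give different answers. Lowering `temperature` toward 0 makes "cat", the most common follower, win almost every time.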

ChatGPT is not Magic

The library analogy for ChatGPT demonstrates one central concept: ChatGPT is not magic. It works by taking a word, finding a likely word to put after it, and so on until it forms a sentence. All of the LLMs mentioned here work this way, though some do it better than others.

ChatGPT does not inherently know math or science or the plot of the latest Avengers movie. Imagine in this scenario that you are the librarian who answers people's questions. You don't need to understand any of the books in your library. If you followed the same steps outlined here, you would give out nearly the same responses that ChatGPT does.